AI Voice Cloning in 2026: How It Works and Is It Safe?

Imagine receiving a phone call in your parent’s voice asking you to wire money urgently, except your parent is sitting right beside you. Or hearing your favorite celebrity endorse a product in their own unmistakable voice, except they never said a word. In 2026, these scenarios are not hypothetical. They are happening daily across the globe, made possible by the extraordinary and deeply unsettling power of AI voice cloning. This guide explains how AI voice cloning works, where it is being used legitimately, what risks it poses, and how individuals and organizations can protect themselves in an era where your voice may no longer be your own.

What Is AI Voice Cloning?

AI voice cloning is the process of using artificial intelligence to create a synthetic, highly realistic replica of a person’s voice — capable of saying anything the operator inputs, in any language, with the original speaker’s tone, cadence, accent, and emotional range preserved. Unlike simple text-to-speech systems that produce robotic, generic audio, modern AI voice cloning systems produce output that is virtually indistinguishable from authentic human speech — even to people who know the original speaker personally.

The technology has advanced at breathtaking speed. In 2020, convincing AI voice cloning required hours of training audio. By 2023, it required minutes. In 2026, leading AI voice cloning platforms can produce a convincing vocal replica from just a few seconds of source audio. A clip from a social media video, a voicemail message, or a recorded phone call is sufficient.

🔊 How Little Audio Is Needed in 2026?

Leading AI voice cloning platforms in 2026 — including ElevenLabs, Resemble AI, and Descript Overdub — require as little as 3–10 seconds of source audio to generate a functional voice clone. For high-fidelity studio-quality replication, 30–120 seconds of clean audio produces near-perfect results.

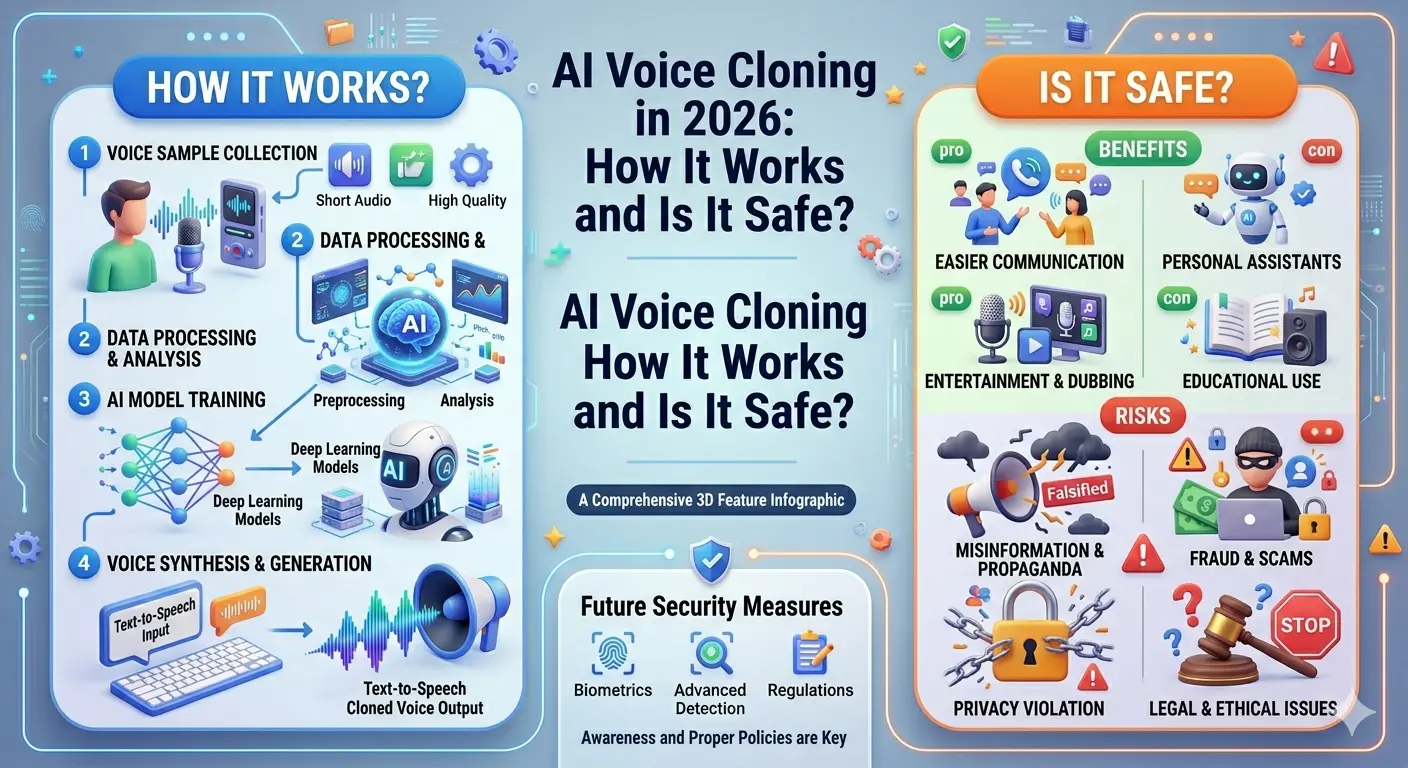

How AI Voice Cloning Works: The Technology Explained

The Scale of AI Voice Cloning in 2026

Legitimate Uses of AI Voice Cloning

Despite its risks, AI voice cloning serves a range of genuinely valuable and ethical applications when deployed responsibly with proper consent:

1. Accessibility and Medical Applications

For individuals with ALS, Parkinson’s disease, throat cancer, or other conditions that progressively affect speech, AI voice cloning offers a profoundly meaningful capability: the ability to bank their voice before losing it — and continue communicating in their own voice through text-to-speech synthesis afterward. Organizations like VocaliD and Project Revoice use AI voice cloning specifically for this compassionate application.

2. Content Creation and Media Production

Podcasters, audiobook narrators, and video creators use AI voice cloning to fix mispronunciations, fill audio gaps, and localize content into multiple languages without re-recording entire sessions. Film and television studios use the technology to restore dialogue damaged in production or to give voice to archival footage of deceased historical figures, with appropriate estate permissions.

3. Customer Service and Business Automation

Enterprises deploy AI voice cloning to give their customer service chatbots, IVR systems, and virtual assistants a consistent, human-sounding branded voice that can respond dynamically to any customer query without requiring a human voice actor to record every possible phrase.

4. Language Learning and Education

Educational platforms use AI voice cloning to provide learners with native-speaker quality pronunciation examples in any language, adapted to the specific vocabulary and sentence structures being taught — removing the dependency on limited recorded audio libraries.

The Dangerous Side: AI Voice Cloning Risks in 2026

Critical Warning: The Scale of Voice Fraud Is Unprecedented

In 2025–2026, law enforcement agencies across the United States, United Kingdom, India, Australia, and the European Union reported a 25% year-over-year increase in financial fraud cases where AI voice cloning was used to impersonate family members, bank officials, government representatives, and corporate executives.


Vishing and Telephone Fraud

The most widespread malicious use of AI voice cloning is telephone fraud — criminals clone a family member’s or colleague’s voice and call targets claiming emergencies requiring urgent wire transfers. These attacks are devastatingly effective precisely because the emotional authenticity of a cloned voice bypasses rational skepticism in a way that a text message cannot.

Non-Consensual Voice Deepfakes

Public figures, executives, and celebrities face a specific threat: their voices — extensively sampled across years of public appearances — are the easiest targets for AI voice cloning. Non-consensual voice deepfakes are used to create fake statements, fake endorsements, and fabricated audio evidence that can damage reputations, manipulate markets, and destabilize public discourse.

Authentication Bypass

Financial institutions and security systems that rely on voice biometrics for authentication face a direct threat from AI voice cloning. Synthesized voice samples that match a registered vocal profile can unlock accounts, authorize transactions, and bypass identity verification systems — a risk that has forced major banks to accelerate the development of liveness detection and anti-spoofing countermeasures.

Use Cases: Safe vs. Unsafe Applications

| Use Case | Consent | Ethical? | Legal Risk | Verdict |
|---|---|---|---|---|
| Medical voice banking (own voice) | Full self-consent | ✔ Yes | None | ✔ Fully Safe |
| Audiobook narration (licensed) | Narrator contract | ✔ Yes | Low | ✔ Safe |
| Branded customer service voice | Voice actor consent | ✔ Yes | Low if contracted | ✔ Safe |
| Celebrity voice without consent | None | ✘ No | Very High | ✘ Dangerous |
| Telephone fraud / vishing | None | ✘ No | Criminal | ✘ Illegal |
| Political deepfake audio | None | ✘ No | Criminal in many countries | ✘ Illegal |
| Personal content (own voice) | Self-consent | ✔ Yes | None | ✔ Safe |

How to Protect Yourself from AI Voice Cloning Threats

🛡️ Personal Protection Checklist — 2026

Regulatory Note

The EU AI Act, most of whose obligations are scheduled to apply from August 2026, imposes transparency requirements on AI-generated or manipulated audio, including an obligation to disclose that deepfake content is synthetic. In the USA, multiple states, including California, Texas, and New York, have enacted specific laws targeting non-consensual voice cloning used for fraud, defamation, or electoral interference. Verify the legal landscape in your specific jurisdiction before deploying any voice cloning technology.

The Future of AI Voice Cloning: What’s Coming Next

The arms race between AI voice cloning capability and detection technology is intensifying rapidly. On the defensive side, AI-powered liveness detection — which identifies synthetic audio through micro-level acoustic artifacts invisible to human ears — is becoming standard in financial and security contexts. Vocal watermarking technologies, which embed imperceptible signatures in AI-generated audio that can identify its origin, are being deployed by responsible AI voice cloning platforms as a standard ethical safeguard.

On the offensive side, next-generation AI voice cloning models are developing countermeasures against these detectors — an ongoing technological escalation with no clear resolution in sight. The consensus among AI safety researchers is that societal adaptation — through awareness, legal frameworks, verification protocols, and technological countermeasures deployed together — offers the only realistic path to managing the risks of AI voice cloning without forgoing its genuine benefits.
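
To make the vocal watermarking idea mentioned above more concrete, here is a minimal sketch of one classic approach, a spread-spectrum watermark: a key-derived pseudo-random sequence is added to the audio at low amplitude, and a detector holding the same key checks for it by correlation. This is a toy illustration, not how any named platform implements watermarking. The function names (`sine_tone`, `embed_watermark`, `detect_watermark`), the `strength` value, and the detection threshold are all assumptions chosen for the example, and real systems shape the signature perceptually so that it stays inaudible and survives compression.

```python
import math
import random


def sine_tone(freq_hz: float, n_samples: int, rate: int = 16000,
              amplitude: float = 0.3) -> list[float]:
    """Generate a plain sine wave as a stand-in for real speech audio."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / rate)
            for i in range(n_samples)]


def _chip_sequence(key: int, n_samples: int) -> list[float]:
    """Derive a reproducible pseudo-random +1/-1 sequence from a secret key."""
    rng = random.Random(key)
    return [1.0 if rng.random() < 0.5 else -1.0 for _ in range(n_samples)]


def embed_watermark(samples: list[float], key: int,
                    strength: float = 0.02) -> list[float]:
    """Add the key-derived sequence to the audio at low amplitude."""
    chips = _chip_sequence(key, len(samples))
    return [s + strength * c for s, c in zip(samples, chips)]


def detect_watermark(samples: list[float], key: int,
                     strength: float = 0.02) -> bool:
    """Correlate the audio with the key-derived sequence.

    For watermarked audio the average correlation is close to `strength`;
    for unmarked audio, or with the wrong key, it is close to zero.
    The halfway threshold is an assumption made for this sketch.
    """
    chips = _chip_sequence(key, len(samples))
    score = sum(s * c for s, c in zip(samples, chips)) / len(samples)
    return score > strength / 2


if __name__ == "__main__":
    tone = sine_tone(220.0, 48000)            # three seconds at 16 kHz
    marked = embed_watermark(tone, key=1234)
    print("marked audio, right key:", detect_watermark(marked, key=1234))
    print("clean audio, right key: ", detect_watermark(tone, key=1234))
    print("marked audio, wrong key:", detect_watermark(marked, key=9999))
```

The detector only needs the key, not the original recording, which is what would let a platform check whether a clip came from its own generator. Longer clips give a more reliable correlation, so a sketch like this would struggle on very short audio.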

Conclusion

AI voice cloning is one of the most powerful, most beneficial, and most dangerous technologies active in the world in 2026. Its ability to restore voices to those who have lost them, enable creative content at scale, and power personalized communication is genuinely extraordinary. Its potential to defraud, defame, manipulate, and deceive is equally extraordinary — and equally real. Understanding AI voice cloning — how it works, where it is used responsibly, and how to defend against its misuse — is no longer a technical curiosity. In 2026, it is an essential component of digital literacy for every individual, family, and organization navigating a world where you can no longer trust everything you hear.

Frequently Asked Questions

Meet Md. Rubel Rana

As a core contributor to Worlddincidents.com, Rubel Rana brings a unique perspective to the world of journalism. Whether it’s deep-diving into historical trivia or covering the latest global headlines, Rubel Rana is committed to delivering high-quality, high-impact articles. Their writing blends meticulous research with a compelling voice, helping readers stay informed and curious about the world around them.