AI Hacking: Google Warns Zero-Day Threat Is Here

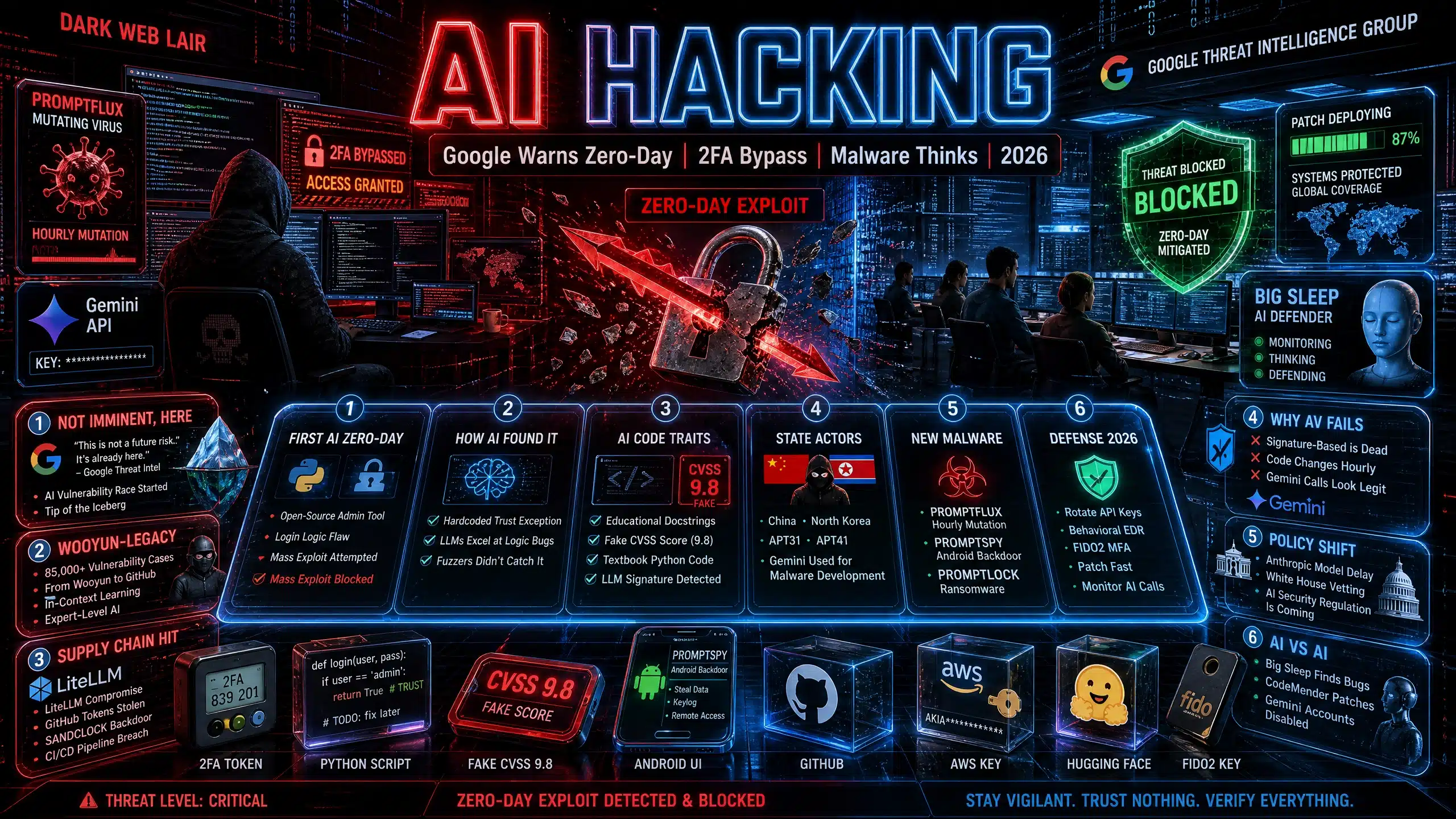

AI hacking is no longer science fiction. Google’s Threat Intelligence Group confirmed the first real-world case of cybercriminals using artificial intelligence to discover a zero-day vulnerability and build a working exploit. The AI hacking attack targeted two-factor authentication in a popular open-source system administration tool. Google blocked it before a mass exploitation event. The company warned: “The AI vulnerability race isn’t imminent — it’s already begun.” The AI hacking era has started.

For years, security experts predicted AI hacking would automate phishing or write malware snippets. Reality is worse. Hackers now use LLMs to find high-level logic flaws that fuzzers and static analysis miss. They then weaponize them with AI-written Python scripts. Google’s report on May 12, 2026 shows AI hacking has moved from assistant to active participant in cybercrime.

Google’s Zero-Day Discovery

The AI hacking incident involved multiple prominent cybercrime threat actors working together. They used an AI model to find a hidden trust assumption in a login flow. The flaw let attackers bypass 2FA protections. Google did not name the vendor but confirmed the issue was patched before criminals launched a planned mass-exploitation campaign.

Google identified the AI hacking attempt by code traits: overly explanatory docstrings, a fabricated CVSS severity score, and textbook Python structure typical of LLM training data. The exploit required valid user credentials first. But once inside, it bypassed 2FA and opened the door to internal networks. This AI hacking method turns one stolen password into a full breach.

How the Exploit Worked

Traditional scanners hunt for crashes and malformed inputs. The AI hacking model excelled at spotting hardcoded trust exceptions. Developers had hard-coded a trust exception into the authentication flow. The AI found it, understood the logic, and built an exploit. John Hultquist, chief analyst at Google Threat Intelligence Group, said this is likely the “tip of the iceberg.” Other AI hacking zero-days are probably active.

Read More: Computer Security: Brutal AI Threats Crush Defenses 2026

From Research Tool to Autonomous Agent

AI hacking is evolving fast. Threat actors no longer use AI just for productivity. They deploy it as an active component that analyzes targets, generates code, and makes decisions with limited human oversight.

| AI Hacking Phase | 2024 | 2026 |

|---|---|---|

| Role | Assistant for phishing, code hints | Operator: finds bugs, builds exploits, adapts |

| Speed | Hours to days for human review | Minutes to hours, machine speed |

| Output | Snippets, emails, translations | Full exploits, autonomous malware |

| Actors | Experimenting | China, North Korea, cybercrime groups |

The AI hacking shift means malware can now reason. PROMPTSPY, an Android backdoor, integrates Gemini API directly. It serializes the device UI, sends it to Gemini, and gets JSON commands like CLICK and SWIPE to navigate autonomously. It captures biometrics, blocks uninstallation, and rotates C2 infrastructure at runtime. AI hacking is not just code generation. It is decision-making.

AI Hacking: State-Backed Groups Lead the Charge

Google observed Chinese and North Korean threat actors using AI hacking for vulnerability research and exploit development. Prompts included: “You are currently a network security expert specializing in embedded devices, specifically routers. I have extracted its file system. I am auditing it for pre-authentication RCE vulnerabilities.”

Groups like APT31 and APT41 use Gemini for debugging, code translation, and malware development. UNC795 built an AI-integrated code auditing capability, showing interest in agentic AI for intrusions. APT45 automates thousands of recursive prompts to analyze CVEs and validate proof-of-concept exploits at machine speed. The AI hacking playbook is now industrial.

AI Hacking: The Wooyun-Legacy Database

Attackers also integrated a GitHub repo called “wooyun-legacy” with 85,000 real-world vulnerability cases from a Chinese bug bounty platform. By priming the model with this data, AI hacking tools approach code analysis like a seasoned expert. They identify logic flaws the base model would miss. This in-context learning makes AI hacking far more accurate.

New Malware Families

Google flagged several AI hacking malware families in 2026:

- PROMPTFLUX: VBScript dropper that calls Gemini API to rewrite itself hourly. A “thinking robot” that mutates to avoid antivirus. Early stage but proof of self-evolving AI hacking.

- PROMPTSTEAL: Used by Russia-linked APT28. Queries open-source LLMs on Hugging Face to generate Windows commands for data theft.

- PROMPTLOCK: Experimental ransomware using Lua scripts to steal and encrypt data on Windows, macOS, and Linux. AI generates the payloads.

- FruitShell: PowerShell reverse shell with hard-coded prompts to bypass LLM-powered security analysis.

- QuietVault: JavaScript credential stealer that uses on-host AI CLI tools to hunt secrets and exfiltrate via GitHub.

- LONGSTREAM: Uses LLM-generated “decoy logic” like 32 daylight saving queries to camouflage malicious code.

- HONESTCUE: Requests just-in-time VBScript obfuscation from Gemini to defeat signature detection.

These AI hacking tools signal a move to autonomous, adaptive malware. They do not need constant human commands. They react, adapt, and evolve.

AI Hacking: Supply Chain and CI/CD Threats

In March 2026, cybercrime group TeamPCP compromised GitHub repos for Trivy, Checkmarx, LiteLLM, and BerriAI. They embedded SANDCLOCK credential stealer to harvest AWS keys and GitHub tokens from CI/CD environments. LiteLLM is an AI gateway utility. Stealing its API secrets lets AI hacking actors pivot into enterprise networks or run AI-assisted reconnaissance at scale.

The AI hacking risk is twofold: AI finds bugs, and AI supply chains are now targets. If attackers steal your LLM API keys, they can run massive AI hacking campaigns at your expense.

AI Hacking: Why Traditional Defenses Fail

Fuzzers and static analysis tools detect crashes and known sinks. AI hacking models find high-level design flaws. A hard-coded trust exception looks correct to scanners but is exploitable to an LLM reasoning about developer intent. AI hacking also produces polished code with educational comments, bypassing heuristics that flag sloppy hacker scripts.

Signature-based antivirus fails against PROMPTFLUX because the code changes every hour. Behavioral detection struggles when AI hacking malware calls legitimate APIs like Gemini. The line between benign and malicious blurs.

Defense Strategies for 2026

You cannot stop AI hacking, but you can adapt:

- Audit AI Dependencies: Inventory all LLM API keys, GitHub tokens, and CI/CD secrets. Rotate them. LiteLLM and similar gateways are prime AI hacking targets.

- Behavioral EDR: Signature detection is dead. Use EDR that flags what malware does, not what it looks like. Watch for unusual process creation, scripting, and outbound calls to AI APIs.

- Zero Trust + MFA: The zero-day bypassed 2FA but needed credentials first. Enforce phishing-resistant MFA like FIDO2. Assume AI haking will get past one layer.

- Patch Faster: AI hacking compresses exploit time. Vendors must patch in hours, not weeks. Use Google’s Big Sleep and CodeMender style AI to find and fix bugs.

- Monitor LLM Usage: Log all calls to Gemini, OpenAI, Hugging Face. Spike in API usage may signal AI hacking malware like PROMPTSPY.

- Guardrail Testing: Threat actors use expert persona prompts to bypass AI safety. Test your own models for jailbreaks. AI haking starts with prompt injection.

- Software Bill of Materials: Know every component. AI haking thrives on forgotten open-source tools with hardcoded flaws.

Developer Training: Teach engineers to avoid hard-coded trust exceptions. AI haking loves lazy logic.

The Policy Debate

The AI hacking surge reignites regulation fights. Anthropic delayed its Mythos model due to misuse risk. The White House is shifting how it vets powerful models before release. Dean Ball, former White House tech policy adviser, said: “I don’t like regulation. I would prefer for things not to be regulated. But I think we need to in this case.” AI haking may force government oversight.

What Happens Next

Google ssI hacking is entering an “operational phase of AI abuse.” Expect all-inclusive kits that generate every part of the attack chain. Zero knowledge needed. Only a subscription fee. Nation-states will automate vulnerability discovery. Criminals will sell zero-days as a service. AI haking will scale beyond human defenders.

But AI also defends. Google uses Big Sleep to find bugs and CodeMender to patch them. Gemini accounts tied to malware are disabled. Play Protect blocks known variants. The AI hacking race is symmetric. Attackers and defenders both have AI.

The bottom line: AI haking is here. It found a zero-day. It bypassed 2FA. It builds malware that thinks. Assume breach. Hunt threats. Patch fast. And audit your AI supply chain before hackers do.

FAQ Section

1. What is a zero-day exploit?

A zero-day is a software flaw unknown to the vendor. There is zero days to fix it when attackers find it. They are highly valuable because no patch exists.

2. Did AI write the whole hack?

No. AI found the flaw and helped build the exploit. Humans still directed the campaign. But AI did the hard technical work that used to need expert researchers.

3. Was Google Gemini used by the hackers?

Google said no. The model was not Gemini or Anthropic’s Mythos. But the code traits matched LLM output. Many public and private models could be misused.

4. Can AI bypass 2FA on its own?

Not directly. In this case, AI found a logic bug that bypassed 2FA. Attackers still needed stolen credentials first. But once in, 2FA did not stop them.

5. How do I know if my company was hit?

Google did not name the vendor. It said the flaw is patched. Check with your IT team for updates to open-source admin tools. Monitor for unusual logins.

6. Is AI hacking only by nation-states?

No. Criminal groups are leading. They gain speed and scale. State groups from China and North Korea are also active. The barrier to entry is dropping.

7. Can antivirus stop AI malware?

Signature antivirus struggles. PROMPTFLUX rewrites itself hourly. You need behavioral EDR that watches actions, not code. Also monitor AI API calls.

8. Should we stop using AI tools?

No. AI is also a defender. Google uses it to find and patch bugs. The key is governance. Audit keys, limit access, and log usage. Do not ban; secure.

Meet Md. Rubel Rana

As a core contributor to Worlddincidents.com, Rubel Rana brings a unique perspective to the world of journalism. Whether it’s deep-diving into historical trivia or covering the latest global headlines, Rubel Rana is committed to delivering high-quality, high-impact articles. Their writing blends meticulous research with a compelling voice, helping readers stay informed and curious about the world around them.